| | | (hh:mm:ss) | Accuracy | Accuracy | Loss | Loss | Rate | | Epoch | Iteration | Time Elapsed | Mini-batch | Validation | Mini-batch | Validation | Base Learning | For more information, see Understand Network Predictions Using LIME. To understand the predictions of a trained image classification neural network, use imageLIME. This example uses lime (Statistics and Machine Learning Toolbox) and fit (Statistics and Machine Learning Toolbox) to generate a synthetic data set and fit a simple interpretable model to the synthetic data set. In training this interpretable model, synthetic observations are weighted by their distance from the query observation, so the explanation is "local" to that observation.

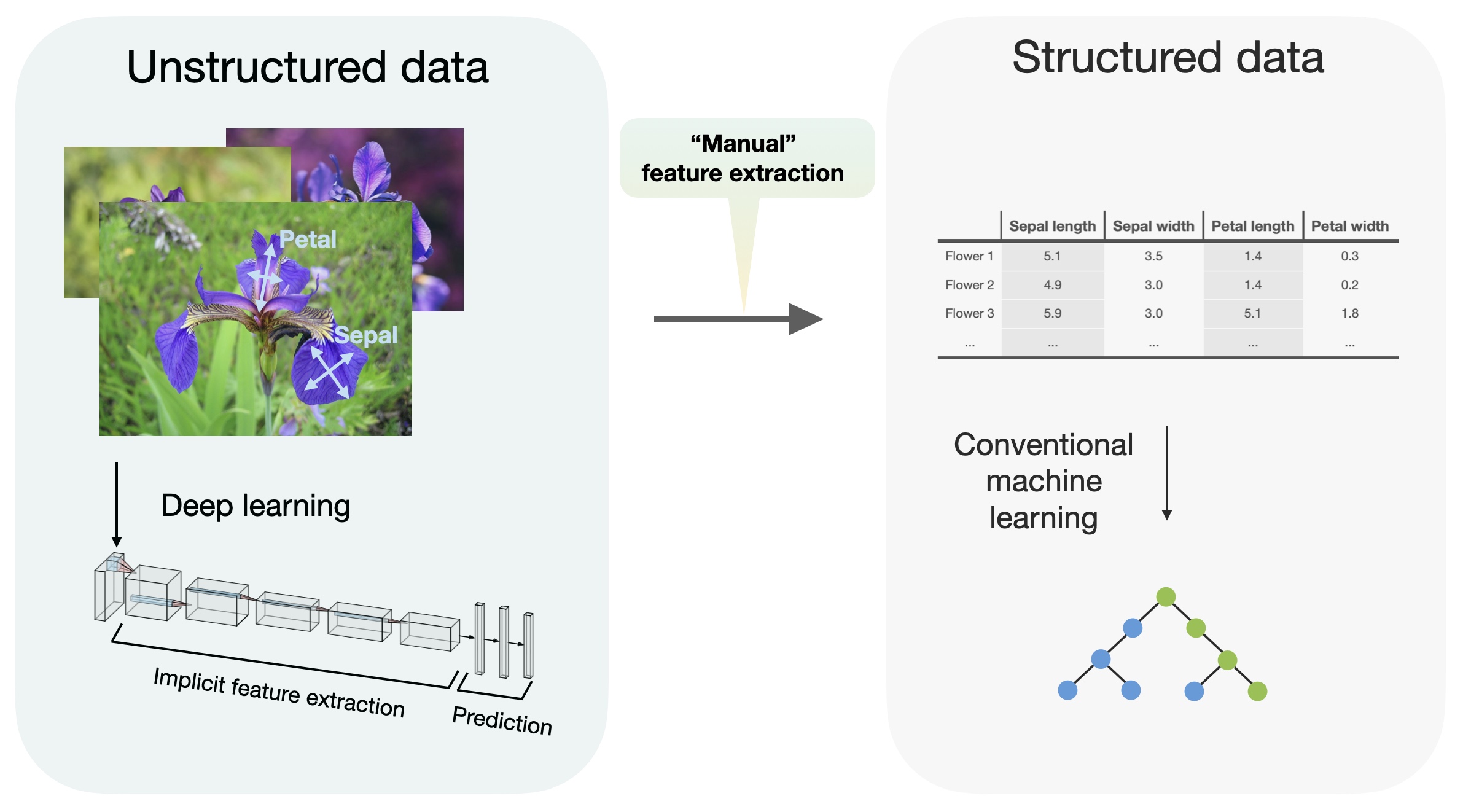

This simple model can be used to understand the importance of the top few features to the classification decision of the network. This synthetic data set is passed through the deep neural network to obtain a classification, and a simple, interpretable model is fitted. For a specified query observation, LIME generates a synthetic data set whose statistics for each feature match the real data set. In this example, you interpret a feature data classification network using LIME. You can use the LIME technique to understand which predictors are most important to the classification decision of a network. This example shows how to use the locally interpretable model-agnostic explanations (LIME) technique to understand the predictions of a deep neural network classifying tabular data.
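The LIME procedure described here (generate synthetic observations, score them with the network, weight them by distance to the query, fit a simple model) can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation behind lime and fit. It rests on several assumptions: the black box is a toy logistic function standing in for a trained network, synthetic features are drawn from independent normal distributions matched to each feature's mean and standard deviation, the distance kernel is an exponential with a hypothetical width of 0.75 times the square root of the feature count, and the simple model is a weighted linear regression.

```python
import math
import random


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][c] * x[c] for c in range(i + 1, n))) / m[i][i]
    return x


def lime_explain(black_box, data, query, n_samples=2000, kernel_width=None, seed=0):
    """Return one local linear coefficient per feature around `query`."""
    rng = random.Random(seed)
    k = len(query)
    # Per-feature statistics of the real data set.
    means = [sum(row[j] for row in data) / len(data) for j in range(k)]
    stds = [math.sqrt(sum((row[j] - means[j]) ** 2 for row in data) / len(data)) or 1.0
            for j in range(k)]
    if kernel_width is None:
        kernel_width = 0.75 * math.sqrt(k)  # assumed default, not taken from the source

    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Synthetic observation whose per-feature statistics match the real data.
        x = [rng.gauss(means[j], stds[j]) for j in range(k)]
        # Standardized offset from the query; its squared length drives the weight.
        z = [(x[j] - query[j]) / stds[j] for j in range(k)]
        weights.append(math.exp(-sum(v * v for v in z) / kernel_width ** 2))
        rows.append([1.0] + z)          # leading 1.0 is the intercept column
        targets.append(black_box(x))    # black-box score for the synthetic point

    # Weighted least squares: (A^T W A) beta = A^T W y.
    p = k + 1
    ata = [[sum(w * r[i] * r[c] for r, w in zip(rows, weights)) for c in range(p)]
           for i in range(p)]
    aty = [sum(w * r[i] * y for r, w, y in zip(rows, weights, targets)) for i in range(p)]
    return solve(ata, aty)[1:]  # drop the intercept


def toy_network(x):
    """Hypothetical stand-in for a trained classifier: ignores the third feature."""
    return 1.0 / (1.0 + math.exp(-(2.0 * x[0] - 1.0 * x[1])))


data_rng = random.Random(1)
real_data = [[data_rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(200)]
query_point = [0.2, -0.1, 0.5]

coefs = lime_explain(toy_network, real_data, query_point)
ranked = sorted(range(len(coefs)), key=lambda j: -abs(coefs[j]))
print("local coefficients:", coefs)
print("features ranked by importance:", ranked)
```

The coefficient magnitudes play the role of the feature importances: a feature the black box ignores should receive a coefficient close to zero, while the sign of each remaining coefficient indicates whether increasing that feature raises or lowers the score near the query point.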
